Introduction

Not so long ago there was a nice Dataiku meetup with Pierre Gutierrez talking about transfer learning. RStudio recently released the keras package, an R interface to Keras for deep learning and transfer learning. Both events inspired me to run some experiments at my work here at RTL and explore how usable it is for us. I would like to share the slides of the presentation that I gave internally at RTL; you can find them on SlideShare.

As a side effect, another experiment that I would like to share is the “poor man’s video analyzer”. Several vendors now offer APIs to analyze videos; see for example the one that Microsoft offers. With just a few lines of R code I came up with a Shiny app that is a very cheap imitation 🙂

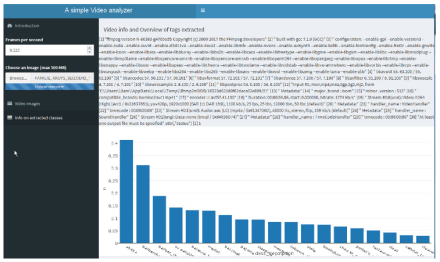

Set up of the R Shiny app

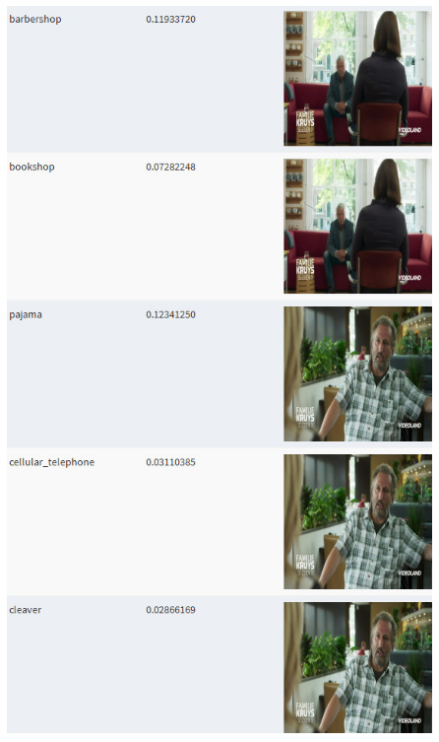

To run the Shiny app a few things are needed. Make sure that ffmpeg is installed; it is used to extract images from a video. TensorFlow and Keras need to be installed as well. The images extracted from the video are passed through a pre-trained VGG16 network so that each image is tagged.
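To make the frame-extraction step concrete, here is a minimal sketch of how an ffmpeg command for it could be assembled. It is written in Python purely for illustration and is not the code of the Shiny app, which is in R. The function name `build_ffmpeg_command`, the `frames` output folder and the `frame_%04d.jpg` file pattern are assumptions made up for this example; only the ffmpeg options `-i` and `-vf fps=...` are standard ffmpeg usage.

```python
from pathlib import Path


def build_ffmpeg_command(video_path, out_dir, fps=1.0):
    """Build an ffmpeg argument list that extracts `fps` frames per second.

    Hypothetical helper for illustration only: the names and the output
    file pattern are assumptions, not taken from the Shiny app.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    # ffmpeg replaces %04d with a running frame number (0001, 0002, ...)
    pattern = str(Path(out_dir) / "frame_%04d.jpg")
    return [
        "ffmpeg",
        "-i", str(video_path),   # input video
        "-vf", f"fps={fps}",     # video filter: keep `fps` frames per second
        pattern,                 # one JPEG per extracted frame
    ]
```

Passing the returned list to `subprocess.run(cmd, check=True)` would run the extraction, provided ffmpeg is on the PATH and the output folder already exists. The resulting JPEG files are then the images that get tagged by the network.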

After this tagging, a data table appears with the images and their tags. That’s it! I am sure there are better visualizations than a data table for showing a lot of images. If you have a better idea, just adjust my Shiny app on GitHub 🙂

Using the app: some screenshots

The interface is simple: specify the number of frames per second that you want to analyse, and then upload a video. Many formats are supported (by ffmpeg), like *.mp4, *.mpeg and *.mov.

If you have code that turns the data table output into a fancier visualization, just go to my GitHub.

For those who want to play around, have a look at a live video analyzer Shiny app here.

And a Shiny app version using miniUI will be a better fit for small mobile screens.

Cheers, Longhow

Nice post!

FYI, I’m developing a computer vision library for R based on OpenCV (simple, for now). It’s a two-package system: the first package compiles and installs OpenCV within your R library folder (so it sits in a standardized location, is isolated from the rest of your system, and doesn’t require re-compilation every time a change is made to the wrapper), and the second package wraps some of OpenCV’s functionality into easy-to-use R functions.

First package (ROpenCVLite): https://github.com/swarm-lab/ROpenCVLite

Second package (Rvision): https://github.com/swarm-lab/Rvision

It’s still in a development phase, but it can read videos and images without requiring external software like ffmpeg.

Hi Simon,

Interesting, I’ll check out both packages. Thanks for mentioning them.

Cheers,

Longhow
