Introduction

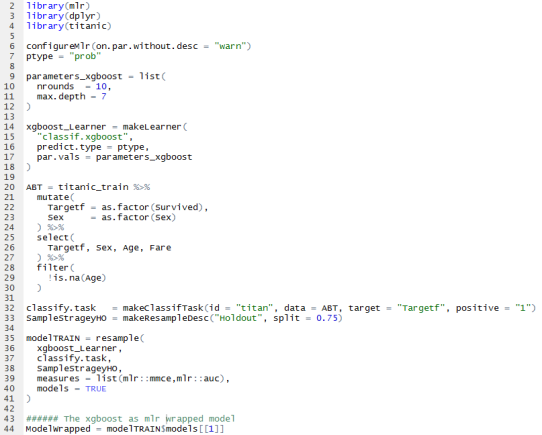

In a previous post I described how easy it is to create and deploy machine learning models (exposing them as REST APIs) with Dataiku. In particular, it was an XGBoost model predicting home values. Now suppose my model for predicting home values becomes so successful that I need to serve millions of requests per hour. It would then be very handy if my back end scaled easily.

In this brief post I outline the few steps you need to take to deploy machine learning models created with Dataiku on a scalable Kubernetes cluster on Google Kubernetes Engine (GKE).

Create a Kubernetes cluster

There is a nice GKE quickstart that demonstrates the creation of a Kubernetes cluster on Google Cloud Platform (GCP). The cluster can be created by using the GUI in the Google Cloud console. Alternatively, if you are making use of the Google Cloud SDK, it basically boils down to creating the cluster and getting its credentials with two commands:

gcloud container clusters create myfirst-cluster

gcloud container clusters get-credentials myfirst-cluster

When creating a cluster, there are many options that you can set. I left all options at their default values. This means that only a small cluster of 3 nodes of machine type n1-standard-1 will be created. We can now see the cluster in the Google Cloud console.
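To make those defaults explicit, the create command can spell out the node count and machine type. The sketch below is only an illustration: the values mirror the defaults described above, and the `RUN` guard variable is a hypothetical convenience so the command can be reviewed before it is executed. It assumes a default zone or region is already configured for gcloud.

```shell
# Values mirror the defaults described above; change them to size the cluster.
CLUSTER_NAME="myfirst-cluster"
NUM_NODES=3
MACHINE_TYPE="n1-standard-1"

# Build the command as a string so it can be inspected first.
CREATE_CMD="gcloud container clusters create ${CLUSTER_NAME} --num-nodes=${NUM_NODES} --machine-type=${MACHINE_TYPE}"
echo "${CREATE_CMD}"

# RUN is a hypothetical opt-in switch: set RUN=1 to really create the cluster.
if [ "${RUN:-0}" = "1" ]; then
  ${CREATE_CMD}
fi
```

Run as is, this only prints the command; with `RUN=1` set in the environment it hands the command to gcloud.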

Set up the Dataiku API Deployer

Now that you have a Kubernetes cluster, you can easily deploy predictive models with Dataiku. First, you need to create a predictive model. As described in my previous post, you can do this with the Dataiku software. The Dataiku API Deployer is then the component that takes care of the management and actual deployment of your models onto the Kubernetes cluster.

The machine where the Dataiku API Deployer is installed must be able to push Docker images to your Google Cloud environment and must be able to interact with the Kubernetes cluster (through the kubectl command).
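A quick way to check these prerequisites on the deployer machine might look like the sketch below. The `check_tools` helper is hypothetical (it is not part of Dataiku or gcloud) and only tests whether the command-line tools are on the PATH. The two commands in the trailing comments are standard gcloud and kubectl commands, and they assume that your images go to a Google registry that gcloud can authenticate against.

```shell
# Hypothetical helper: report whether each required tool is on the PATH.
# Returns 0 when all tools are found, 1 when at least one is missing.
check_tools() {
  missing=0
  for tool in "$@"; do
    if command -v "$tool" >/dev/null 2>&1; then
      echo "found: $tool"
    else
      echo "missing: $tool"
      missing=1
    fi
  done
  return "$missing"
}

check_tools docker kubectl gcloud || echo "install the missing tools first"

# Once the tools are present, these commands exercise the two requirements:
#   gcloud auth configure-docker   # register gcloud as a Docker credential helper
#   kubectl get nodes              # confirm kubectl can reach the cluster
```

If `kubectl get nodes` lists the nodes of your cluster, the second requirement is met.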

Deploy your stuff……

My XGBoost model created in Dataiku is now pushed to the Dataiku API Deployer. From the GUI of the API Deployer you can now select the XGBoost model and deploy it on your Kubernetes cluster.

The API Deployer is a management environment: it shows which models (and model versions) are already deployed, checks whether the models are up and running, and manages your infrastructure (Kubernetes clusters or normal machines).

When you select a model that you wish to deploy, you can click deploy and select a cluster. It will take a minute or so to package that model into a Docker image and push it to GKE. You will see a progress window.

When the process is finished, you will see the new service in Kubernetes Engine on GCP.

The model is up and running, waiting to be called. You could call it with curl, for example:

curl -X POST \
  http://35.204.180.188:12000/public/api/v1/xgboost/houseeprice/predict \
  --data '{ "features" : {
    "HouseType": "Tussenwoning",
    "kamers": 6,
    "Oppervlakte": 134,
    "VON": 0,
    "PC": "16"
  }}'
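When you call the model repeatedly, it can be convenient to keep the features in a file and let curl report HTTP errors. The following is a minimal sketch under a few assumptions: the file name `payload.json` and the `RUN` guard variable are hypothetical, and the endpoint is simply the one from the example above.

```shell
# Endpoint copied from the example above.
URL="http://35.204.180.188:12000/public/api/v1/xgboost/houseeprice/predict"

# Store the features in a file (hypothetical name) so they are easy to edit.
cat > payload.json <<'EOF'
{ "features" : {
  "HouseType": "Tussenwoning",
  "kamers": 6,
  "Oppervlakte": 134,
  "VON": 0,
  "PC": "16"
}}
EOF

# RUN is a hypothetical opt-in switch: set RUN=1 to send the request.
if [ "${RUN:-0}" = "1" ]; then
  # --fail turns HTTP errors into a non-zero exit code; --max-time caps the wait.
  curl --fail --silent --show-error --max-time 10 \
    -X POST "${URL}" --data @payload.json
fi
```

Without `RUN=1` the script only writes the payload file, which makes it easy to check the features before anything is sent.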

Conclusion

That’s all there is to it! You now have a scalable model serving engine, ready to be easily resized when the millions of requests start to come in. Besides predictive models, you can also deploy and expose any R or Python function via the Dataiku API Deployer. Don’t forget to shut down the cluster to avoid incurring charges to your Google Cloud Platform account:

gcloud container clusters delete myfirst-cluster
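While the cluster is still running, the resizing mentioned above could be done along these lines. This is a sketch only: the target of 5 nodes is an arbitrary illustrative number, and `RUN` is a hypothetical opt-in guard so that the command is printed rather than executed by default.

```shell
CLUSTER_NAME="myfirst-cluster"
TARGET_NODES=5   # illustrative value, not a recommendation

# Build the resize command as a string so it can be inspected first.
RESIZE_CMD="gcloud container clusters resize ${CLUSTER_NAME} --num-nodes=${TARGET_NODES}"
echo "${RESIZE_CMD}"

# RUN is a hypothetical opt-in switch: set RUN=1 to really resize the cluster.
if [ "${RUN:-0}" = "1" ]; then
  ${RESIZE_CMD}
fi
```

Resizing changes the number of nodes in the cluster; how the deployed service makes use of them depends on its deployment settings.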

Cheers, Longhow.